|

Elon Musk's X, the social media site formerly known as Twitter, is selling user accounts that are no longer in use for the price of $50,000 and higher. According to Forbes, a team within the company, known as the @Handle Team, has started to work on a handle marketplace to sell the account names left unused by people who originally registered them. Notably, this comes months after Mr Musk unveiled a plan to implement such a program in the near future.

Now, Forbes has uncovered emails indicating that the @Handle Team is actively working to sell disused user handles. The outlet reported that X has already sent solicitations to potential buyers, requesting a fixed fee of $50,000 to initiate the account purchase. These emails were sent by active X employees, who mentioned that the company had made recent updates to its @Handle guidelines, procedures and fees. Notably, after his purchase of the micro-blogging site, Mr Musk had hinted at his plans to sell the old usernames. A "vast number" of handles had been taken by "bots and trolls", he tweeted days after his Twitter acquisition. In January this year, several reports then suggested that the billionaire was planning to free up as many as 1.5 billion usernames. In May, X had already begun the process of removing inactive accounts from its platform. Forbes reported that on Friday evening, X's username registration policy still stated that "unfortunately, we cannot release inactive usernames at this time". Its "inactive account policy," on the other hand, warned users to log in every 30 days to avoid being considered inactive. But it also said X was not currently releasing inactive usernames.

Meanwhile, Elon Musk has indicated his desire to transform X into an "everything app". In a post, the billionaire said that the rebranded platform would be expanded to offer "comprehensive communications and the ability to conduct your entire financial world". In a recent internal meeting, Mr Musk also dropped a hint at an unlikely new feature for the micro-blogging site - dating. Musk said a person's X posts can be "the biggest indicator" of whether they are someone you'd want to hire. "I think the same is true also on the romantic front. Finding someone on the platform. Obviously, I found someone and friends of mine have found people on the platform. And you can tell if you're a good match based on what they write," he added.


One of the defining features of Musk, aside from his penchant for X, is his self-branding as a genius trying to push humanity forward. In reality, many of his projects, like the Vegas Loop, which is a passenger car tunnel in Las Vegas meant to reduce congestion instead of utilizing public transportation, are abjectly stupid. There are also many misconceptions that he circulates to reinforce the common narrative of his sycophants, like that he founded Tesla, the electric carmaker. But he didn’t create the company, he bought it and later called himself the “founder,” which led to a legal battle that allowed him to keep the title despite not actually being what the English language would define as a founder. A local news report from the San Francisco Bay area on this issue also describes how he pulled the same thing with PayPal, which was the main product of Confinity, a startup that had merged with Musk’s X.com in 2000. If simply being an early investor in a company would define one as a founder, then every person who pays US taxes could be considered a Tesla founder because of the large amounts of federal contracts that prop the EV maker up.

Musk shouldered his way into the prestige of being a founder of some of these companies in order to boost his own image. It gives a serious amount of clout in the tech bro environment to call oneself a founder. Therefore, if history is any guide, the plan appears to be this: Elon Musk’s Twitter rebranding and the introduction of new features is designed so that he can label himself the company’s founder. It’s not about any grand corporate strategy or serious attempt at becoming the app of power; he just wants to take credit for Jack Dorsey’s product while blatantly ripping IP from China.

Elon Musk’s lawyers say Mark Zuckerberg's rival platform uses stolen trade secrets

According to EU code, tech platforms like Twitter are connected with “fact-checkers, civil society, and third-party organizations with specific expertise on disinformation.” In other words, avid gatekeepers of the establishment narrative. And on August 25th, adherence will no longer be voluntary.

The EU should consider getting out of the control freak business if it truly wants to help the European free press. Maybe then, journalists here in Europe trying our best to fully inform our audiences against information barriers created by Brussels won’t have to redirect our internet connections to places like Vietnam, Mexico, Turkey, or Brazil in order to access information and sources that the EU doesn’t like.

Elon Musk has announced that starting April 15, only verified Twitter accounts will be eligible to be featured on the platform’s recommendation timeline. The tech mogul explained the move in a Twitter post on Monday, stating it’s “the only realistic way to address advanced AI bot swarms taking over.”

Apart from no longer being featured in other users’ ‘For You’ feeds, unverified accounts – those that have not paid the $7 monthly fee to have their account verified with a blue checkmark – will also lose the ability to vote in polls. Musk again explained the decision by pointing to the prevalence of bots on the platform. It is unclear, however, if Musk was only referring to polls created by Twitter and himself – as he often gauges public opinion on key decisions through this tool – or all polls on the platform. Twitter announced last week that it would remove the verified status of some ‘legacy’ accounts by April 1, meaning only those paying a monthly subscription will now have the blue checkmark in their profiles. According to analysts from Sensor Tower, Twitter currently has an estimated paying user base of just over 385,000 mobile subscribers worldwide on both iOS and Android. Critics of Musk’s algorithm change say it will significantly hinder the recommendation timeline’s relevance, as it will essentially prevent regular people from reaching a wider audience and only feature paying users, brands, and accounts of officials. Meanwhile, Twitter has been dealing with a source code leak, after an unknown hacker or group of people posted the code on GitHub – a software collaboration platform. Twitter has filed a court petition seeking to identify those responsible for the leak, arguing that the code, which underpins the website’s entire operation, could expose security vulnerabilities. Musk bought Twitter for $44 billion in late October 2022. After appointing himself CEO and vowing to transform the site into a free speech platform, the billionaire fired nearly three-quarters of Twitter’s workforce, removed some of its more contentious censorship policies, and restored a number of banned accounts, including that of former US President Donald Trump. 
However, he has yet to make the company profitable, and its value has declined by half since the takeover, according to the Wall Street Journal, despite workforce cuts and the introduction of a subscription model.

Twitter owner Elon Musk has confirmed he will stand by his promise to resign as the company’s chief executive, after the platform’s users voted for him to step down, but suggested that finding a successor may take some time.

“I will resign as CEO as soon as I find someone foolish enough to take the job! After that, I will just run the software & servers teams,” Musk announced in a tweet on Tuesday. CNBC previously reported that Musk was “actively looking, asking, trying to figure out who the candidate pool might actually be.” On Sunday, the billionaire posted an informal poll asking Twitter users if he should step down as head of the company. Some 57.5% of the 17 million respondents voted for Musk to leave his post. On Monday, Musk stated that henceforth only Twitter Blue subscribers will be able to voice their opinions in polls about policy changes on the platform. After completing his $44 billion deal to buy Twitter, Musk became its majority owner, which means that no one can force him out. However, in recent weeks, the CEO has introduced a number of controversial changes that have caused a massive public backlash. At the same time, the self-styled “free speech absolutist” authorized the release of internal documents in an effort to provide transparency about Twitter’s past decision-making. Former Twitter CEO Jack Dorsey has issued a response to the recently leaked ‘Twitter Files’, admitting to a host of major mistakes during his time as chief executive, while warning against “centralized control” of the internet by governments and corporations. The tech entrepreneur addressed the leaked internal documents in a Tuesday blog post, acknowledging that Twitter failed to uphold its guiding principles near the end of his tenure, and that he “completely gave up” on his own vision for the site after “activist” investors bought into the company sometime in 2020. Though he did not elaborate on the new investors or how they might have swayed the platform, Dorsey said Twitter’s focus on controlling public dialogue was ultimately one of its greatest errors. 
He cited the decision to permanently suspend President Donald Trump in the wake of the riot at the US Capitol in January 2021, saying it was “the right thing for the public company business at the time, but the wrong thing for the internet and society.”

“The biggest mistake I made was continuing to invest in building tools for us to manage the public conversation, versus building tools for the people using Twitter to easily manage it for themselves,” he said. “This burdened the company with too much power, and opened us to significant outside pressure (such as advertising budgets). I generally think companies have become far too powerful, and that became completely clear to me with our suspension of Trump’s account.”

Dorsey was reportedly on vacation at the time of the Trump ban and had delegated decision-making to executives Yoel Roth and Vijaya Gadde – as revealed in the Twitter Files – but nonetheless said he bears sole blame for the errors in judgment. “The current attacks on my former colleagues could be dangerous and [don’t] solve anything. If you want to blame, direct it at me and my actions, or lack thereof,” he continued, also insisting “there was no ill intent or hidden agendas” behind Twitter’s more contentious decisions and that the company “acted according to the best information we had at the time.” Tuesday’s blog post also included a lengthy section warning of “centralized” power over the internet, stating that social media platforms should remain “resilient to corporate and government control” while suggesting Twitter had failed to do so under his watch. The former CEO, who stepped down from the role in November 2021, went on to say that he welcomes the “fresh reset” brought by Twitter’s new owner, billionaire entrepreneur Elon Musk, expressing hopes that the site will become “uncomfortably transparent.” However, he argued that the Twitter Files should have been released “Wikileaks-style,” or in full, rather than being leaked to select journalists by the site’s new management. Spearheaded by reporters Matt Taibbi and Bari Weiss, the documents have been published on a rolling basis with Musk’s blessing, shedding light on a number of controversial decisions made by the company. Five installments have gone public so far, including material surrounding Trump’s suspension, Twitter’s close cooperation with US intelligence agencies, the practice of shadow banning, as well as a site-wide ban on a New York Post report about the foreign business dealings of Hunter Biden, the son of President Joe Biden. 
Twitter boss Elon Musk has vowed to extend a “general amnesty” to an unspecified number of suspended users, a week after reversing former US President Donald Trump’s lifetime ban from the platform.

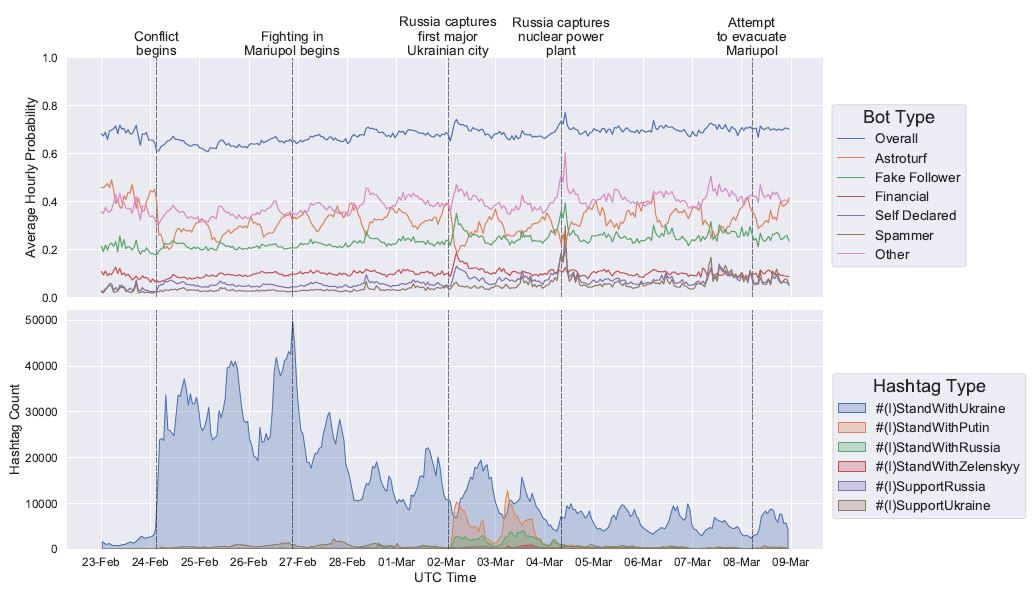

“The people have spoken. Amnesty begins next week,” Musk tweeted on Thanksgiving Day. He added “Vox Populi, Vox Dei,” a Latin phrase that means “the voice of the people is the voice of God.” The SpaceX and Tesla CEO launched a Twitter poll on Wednesday, asking if Twitter should “offer a general amnesty to suspended accounts, provided that they have not broken the law or engaged in egregious spam?” Out of more than 3.1 million users who took part, 72.4% voted ‘yes’ and 27.6% voted ‘no’. In a separate message Musk also promised to start freeing and offering up for grabs “vast numbers of handles” that had previously been “consumed” by bots and trolls. Since acquiring Twitter for $44 billion last month, Musk has faced growing criticism for laying off hundreds of employees and reversing the permanent suspensions of multiple notable accounts, including former US President Donald Trump following a similar public vote. While critics claimed that Musk’s actions fuel hate speech, harassment and misinformation, he has rejected accusations he was some kind of “right-wing bogeyman” and insisted that Twitter under his ownership has not banned any leftists, not even for “utter lies.” It remains to be seen how many users would be eligible for amnesty. This week the platform already reinstated Rep. Marjorie Taylor Greene, whose personal Twitter account had been permanently banned since early January 2022 for posting “misinformation” about the Covid-19 pandemic. Musk, however, drew the line at Alex Jones, saying he had “no mercy” for someone who used children’s deaths for clout. Musk had vowed to transform the platform and turn it into a bastion of free speech, saying it was “important to the future of civilization” to have a digital town square where a wide range of beliefs could be discussed. Researchers from the University of Adelaide have found bots have had a major online presence during the war between Russia and Ukraine. 
The researchers analysed 5,203,764 tweets, retweets, quote tweets and replies posted to Twitter between 23 February 2022, and 8 March 2022, containing the hashtags #(I)StandWithPutin, #(I)StandWithRussia, #(I)SupportRussia, #(I)StandWithUkraine, #(I)StandWithZelenskyy and #(I)SupportUkraine. “We found that between 60 and 80 per cent of tweets using the hashtags we studied came from bot accounts during the first two weeks of the war,” said co-lead researcher Joshua Watt, an MPhil candidate in Applied Mathematics and Statistics from the University of Adelaide’s School of Mathematical Sciences. “This drove more angst in the online discourse and even impacted discussions surrounding people’s decision to flee or stay in Ukraine. “We observed increases in words such as ‘shame’, ‘terrorist’, ‘threat’, and ‘panic’. “Pro-Russian human accounts were having the largest influence on discussions of the war – particularly on accounts which were pro-Ukraine. “To our knowledge, this is the first body of published work which addresses online influence operations in the context of Russia’s invasion of Ukraine in 2022. “In the past, wars have been primarily fought physically, with armies, air force and navy operations being the primary forms of combat. “However, social media has created a new environment where public opinion can be manipulated at a very large scale. As a result, these environments can be used to manipulate discussion, as well as cause disruption and overall public distrust.”
Fellow co-lead researcher, Bridget Smart, a Masters student in Applied Mathematics and Statistics, added: “Our research identifies that this is happening during the Russia-Ukraine war and provides a statistical framework which quantifies the extent to which this is happening. “This work extends and combines existing techniques to quantify how bots are influencing people in the online conversation around the Russia-Ukraine invasion.

“It opens up avenues for researchers to understand quantitatively how these malicious campaigns operate, and what makes them impactful. This research has identified that social media organisations may need to be better equipped for detecting and handling the use of bots on their networks. “It has identified that governments may need to have stricter policies on social media organisations, and that social media can be a vital tool during conflict.” The paper titled “#IStandWithPutin versus #IStandWithUkraine: The interaction of bots and humans in discussion of the Russia/Ukraine war” has been published on arXiv and will be presented at The International Conference on Social Informatics in Glasgow from 19-21 October.

Elon Musk has said he is terminating a $44bn deal to buy Twitter, saying the social media company did not provide information about fake or spam accounts on the platform. In a filing to the Securities and Exchange Commission (SEC) on Friday, Musk’s lawyers said Twitter had failed or refused to respond to multiple requests for information on those accounts, which is fundamental to the company’s business performance.

“Sometimes Twitter has ignored Mr. Musk’s requests, sometimes it has rejected them for reasons that appear to be unjustified, and sometimes it has claimed to comply while giving Mr. Musk incomplete or unusable information,” the filing reads. “Twitter is in material breach of multiple provisions of that Agreement, appears to have made false and misleading representations upon which Mr. Musk relied when entering into the Merger Agreement,” it also said. Twitter did not immediately respond to requests for comment from The Associated Press and Reuters news agencies. The company’s chairman, Bret Taylor, tweeted on Friday evening that, “the Twitter Board is committed to closing the transaction on the price and terms agreed upon with Mr. Musk and plans to pursue legal action to enforce the merger agreement”. The terms of the deal require Musk, the CEO of Tesla, to pay a $1bn break-up fee if he does not complete the transaction.

Linda Kim

SAN FRANCISCO, August 20 -- Twitter and Facebook said on Monday (Aug 19) they had dismantled a state-backed information operation originating in mainland China that sought to undermine protests in Hong Kong. Twitter said it suspended 936 accounts and the operations appeared to be a coordinated state-backed effort originating in China. It said these accounts were just the most active portions of this campaign and that a "larger, spammy network" of approximately 200,000 accounts had been proactively suspended before they were substantially active. Facebook said it had removed accounts and pages from a small network after a tip from Twitter. It said that its investigation found links to individuals associated with the Chinese government. Social media companies are under pressure to stem illicit political influence campaigns online ahead of the US election in November 2020. A 22-month US investigation concluded Russia interfered in a "sweeping and systematic fashion" in the 2016 US election to help Donald Trump win the presidency.
The Chinese embassy in Washington and the US State Department were not immediately available to comment. The Hong Kong protests, which have presented one of the biggest challenges for Chinese President Xi Jinping since he came to power in 2012, began in June as opposition to a now-suspended bill that would allow suspects to be extradited to mainland China for trial in Communist Party-controlled courts. They have since swelled into wider calls for democracy. Twitter in a blog post said the accounts undermined the legitimacy and political positions of the protest movement in Hong Kong. Examples of posts provided by Twitter included a tweet from a user with photos of protesters storming Hong Kong's Legislative Council building, which asked: "Are these people who smashed the Legco crazy or taking benefits from the bad guys? It's a complete violent behavior, we don't want you radical people in Hong Kong. Just get out of here!" In examples provided by Facebook, one post called the protesters "Hong Kong cockroaches" and claimed that they "refused to show their faces". In a separate statement, Twitter said it was updating its advertising policy and would not accept advertising from state-controlled news media entities going forward. Twitter told Reuters the advertising change was not related to the suspended accounts. In the past week, China’s official Xinhua news agency and state broadcaster China Global Television Network (CGTN) paid to promote videos that portrayed the protests as violent and said Hong Kong citizens wanted the demonstrations to end, according to Twitter’s Ads Transparency Centre. Twitter said it did not have data on how much revenue it generates from state-controlled media advertising. Many countries including the United States do not have clear standards on state media’s purchase of online advertising.
Total digital ad spending in Hong Kong will grow 11 per cent to reach US$786.1 million in 2019, according to projections by US digital market data analyst eMarketer. Alphabet's YouTube video service told Reuters in June that state-owned media companies maintained the same privileges as any other user, including the ability to run ads in accordance with its rules. YouTube did not immediately respond to a request for comment on Monday on whether it had detected inauthentic content related to protests in Hong Kong. In a tweet on Sunday, China’s influential state-run tabloid, The Global Times, hailed the response of Chinese “netizens” to the protests, saying: “Chinese netizens’ power! Amid escalating protests in Hong Kong, Chinese netizens on Saturday swept Facebook and Instagram to denounce secessionist posts and show support for Hong Kong police.” About 98 per cent of social network users in Hong Kong, or 4.7 million people, will log into Facebook at least once a month in 2019, according to eMarketer projections, while 9.4 per cent of social network users will use Twitter.

LONDON, July 10 -- It’s the F1 British Grand Prix at Silverstone this weekend, which means many from London and the south-east will be headed up the M1, M40, A5 or taking a “quick route only we know” over the coming days to take in the spectacle in Northamptonshire. Doubtless many of them will be followers of current F1 World Champion Lewis Hamilton. But, how many of them actually “follow” Hamilton on Twitter? Research carried out by private number plates company Click4reg.co.uk suggests the figure is not as big as it might appear. The research concludes that 34.3% of Hamilton’s followers are, to use a currently popular word – fake! Even allowing for these figures, Hamilton still has the biggest following among F1 drivers who have a personal Twitter account (two – Kimi Räikkönen and Sebastian Vettel – don’t have one). The research showed that:

Last November, Instagram cracked down on celebrities and influencers with followers who aren’t genuine. This ‘purge’ reduced significant numbers of fake, inactive, spam, bots, or as often discovered – bought – followers. Why buy followers? Users may do so to appear more influential, to harness more media and therefore commercial attention, among other reasons. But Instagram isn’t the only social platform faced with this issue. Twitter has battled the problem of bots and spam accounts for many years. So, with the British Grand Prix as its cue, Click4reg wanted to discover how many followers of the 20 competing F1 drivers are fake.

Author: Lora Smith

RIYADH, July 4 -- Rap star Nicki Minaj will perform in Saudi Arabia later this month, organisers of the Jeddah Season cultural festival announced, as the ultraconservative kingdom tries to shed decades of restrictions on entertainment. Saudi organisers said at a press conference Wednesday that Minaj, whose real name is Onika Tanya Maraj, will headline the festival on July 18. Minaj is known for her outlandish performances and flamboyant, hyper-sexualised image. Her music videos often feature figure-hugging, skin-baring outfits and provocative dancing. Her lyrics are laced with profanities and Minaj's songs mainly address sex, rumoured liaisons with other rappers and female empowerment. Her famously large posterior is regularly referenced, as is a play on words between her surname and a French term for group sex. The concert, in line with Saudi laws, will be alcohol and drug-free, open to people 16 and older and will take place at the King Abdullah Sports Stadium in the Red Sea city. Saudi Arabia is promising quick electronic visas for international visitors who want to attend. Local media reported the headline act, to be televised on MTV, will also feature British musician Liam Payne and American DJ Steve Aoki.
While the announcements for Payne and Aoki posted on the Jeddah Season Twitter account featured photos of the artists, Minaj's announcement ran with only an animated image of her name. Her image was used at the press conference on Wednesday, however.

All her songs are indecent

The announcement triggered a storm on social media, ranging from joy to criticism and disappointment. One fan posted a video of Minaj performing with the caption: "Am I dreaming or is this really happening?", while several others posted doctored images of the rapper in traditional Saudi dress. In a profanity-filled video posted on Twitter that has been viewed more than 37,000 times, a Saudi woman wearing a loose headscarf accused the Saudi government of hypocrisy for inviting Minaj to perform but requiring women who attend the concert to wear the modest full-length robe known as the abaya. "She's going to go and shake her ass and all her songs are indecent and about sex and shaking ass and then you tell me to wear the abaya," the Saudi woman said. "What the hell?" Minaj is yet to comment on her headline slot. This is not her first performance in the Middle East, having previously performed in the United Arab Emirates in 2013. In 2015, Minaj performed at a Christmas event in Angola despite calls from a human rights group to abandon the concert. The Human Rights Foundation said the money to pay Minaj came from "government corruption and human rights violations".

Author: Linda Lim

ROTTERDAM, March 11 -- Only a fool would cheer the banning of Tommy Robinson by Facebook and Instagram. It doesn’t matter if you like or loathe him. It doesn’t matter if you think he’s a searing critic of the divisive logic in the politics of diversity or Luton’s very own Oswald Mosley in Jack Wills clobber. The point is that his expulsion from social media confirms that corporate censorship is out of control.
It speaks to a new kind of tyranny: the tyranny of unaccountable capitalist oligarchs in Silicon Valley getting to decide who is allowed to speak in the new public square that is the internet. Robinson, having already been thrown off Twitter and Patreon, was unceremoniously cast out from Facebook and Instagram yesterday. He had one million followers. So we are not talking about some bedroom-bound imbecile who says mad things to 27 fellow losers on Twitter, but about a public figure, someone who commands an audience and enjoys political influence. His crime, in the eyes of Facebook’s and Instagram’s community-standards cops, was to nurture ‘organised hate’ towards Muslims. He used ‘dehumanising language’ and made ‘calls for violence’, the social-media giants decreed. And therefore he had to go. There are many disturbing things about this latest act of Silicon Valley silencing of an awkward public voice. The first is the apparent involvement of Mohammed Shafiq, CEO of the Ramadhan Foundation. Yesterday Shafiq boasted about having met with Facebook representatives to encourage them to ban Robinson over his ‘brainwashing’ of his followers into feeling ‘racism’ towards Muslims. This is the same Mohammed Shafiq who once attended an event with Hassan Haseeb ur Rehman, a Pakistani cleric who praised the murder in 2011 of the governor of Punjab, Salman Taseer, by a radical Islamist who despised Taseer for his opposition to Pakistan’s blasphemy laws and his calls for the Christian ‘blasphemer’, Asia Bibi, to be released from jail. Shafiq also called for the anti-extremist campaigner Maajid Nawaz to be dumped as a parliamentary candidate for the Liberal Democrats after Nawaz committed the speechcrime of tweeting a cartoon of Jesus & Mo. All of which raises a question: why is Facebook reportedly taking advice from someone like that? Does it also meet with campaigning Christians and jot down which critics of Christ they would like to see removed from its website? 
The other disturbing thing is the growing power of internet corporations to police public speech. Not content with banning the likes of Alex Jones and Milo Yiannopoulos, and feminists like Meghan Murphy who criticise the politics of transgenderism, and various white nationalists, now the social-media giants are going after right-wing political activists whose views they don’t like. Alongside Robinson it has been reported that some leading UKIP activists have had their Facebook accounts suspended. This has a very strong whiff of political censorship. It is perverse and dispiriting to see ostensible leftists and progressives whoop with delight as corporate tech giants suspend or censor political undesirables. First because since when was the left in favour of the exercise of property rights against people’s rights? In their applauding of Silicon Valley’s censorious rampage these left-wing anti-fascists sound an awful lot like right-wing libertarians. They are effectively saying, ‘Hey, these are private companies, so they can ban whoever they want to’. Suddenly their traditional concern with reining in the unaccountable power of big business goes out the window and they find themselves standing with the bosses against the individual.

For example, a message asking people to "share and watch" a clip could be a spambot. But it could also be a protester -- maybe one like those in Ferguson, Mo. -- asking people to spread awareness about a crime. "We don't want to gamble on potentially silencing that crucial speech by classifying it as spam and suspending it," she said. "That means we evaluate hundreds of parameters when looking at account behaviors, and even then, we can still get it wrong and have to reevaluate." (The full talk is 10 minutes long, but lays out the basics of Twitter's philosophy pretty well.) Even with graphic, disturbing and violent images, Harvey said, there are a lot of gray areas. And, as my Switch colleague Brian Fung pointed out, there is no industry standard. Each company makes its own rules based on how it wants to shape its community. Facebook and Instagram, for example, have rules against nudity in photos. Twitter has no such rules -- a decision that it's made as a company to live by its assertion that, in nearly all cases, the "tweets must flow."

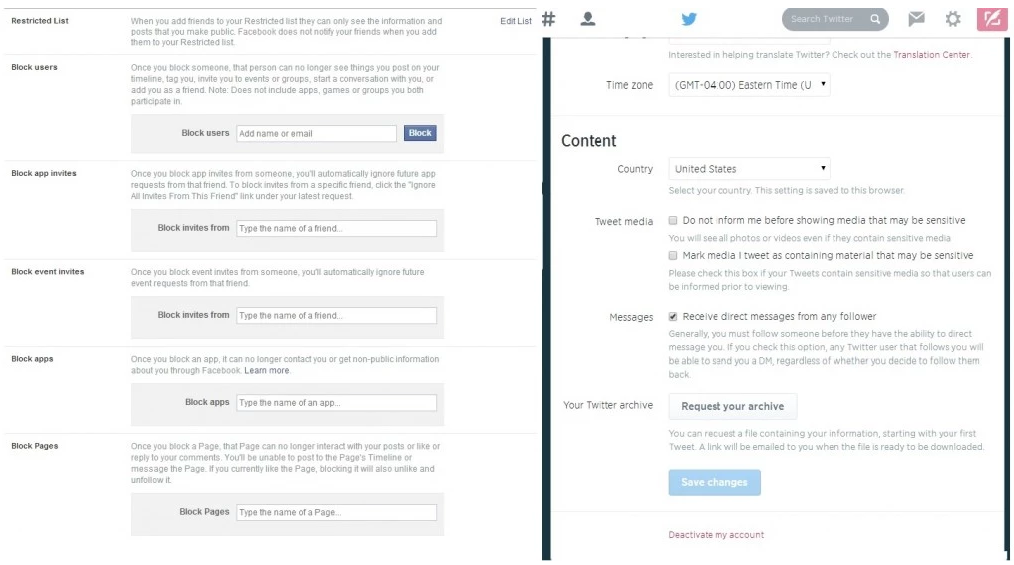

While companies continue to debate what they can and should do at the administrative level to stop certain kinds of images, there are some things that individual users can do to take action on their own accounts. On Facebook, for example, you can block users, apps or pages if you don't want to see the content they publish. Twitter offers users the option to change their media settings themselves. Here, if users want to see images that could be considered "sensitive" and skip seeing Twitter's warning message, they can opt to do so. Users can also decide to mark their own media as sensitive by default, if they think their pictures could upset others. |
