
The world could encounter major disasters before the use of Artificial Intelligence (AI) weapons is regulated in a proper manner, according to Turing Award-winning scientist Geoffrey Hinton, seen as a pioneer of the technology.

The former Google engineer, who quit the company last year, compared the use of the technology for military purposes to chemical weapons deployment – warning that “very nasty things” will occur before the global community arrives at a comprehensive agreement comparable to the Geneva Conventions. “The threat I spoke out about is the existential threat,” Professor Hinton said on Tuesday in an interview with Irish broadcaster RTE News, emphasising that “these things will get much more intelligent than us and they will take over.” The computer scientist highlighted the impact of AI on disinformation and job displacement, and also on weapons of the future. “One of the threats is ‘battle robots’ which will make it much easier for rich countries to wage war on smaller, poorer countries and they are going to be very nasty and I think they are inevitably coming,” Hinton warned. He urged governments to put pressure on tech majors, especially in California, to conduct in-depth research on the safety of AI technology. “Rather than it being an afterthought, there should be government incentives to ensure companies put a lot of work into safety and some of that is happening now,” Hinton said. The scientist also highlighted huge benefits that AI can bring to humanity, particularly in healthcare, adding that he does not regret any of his contributions to the technology. Despite the mounting interest in AI, several high-profile figures in the tech industry have warned of the potential dangers posed by the unregulated adoption of the technology. Hinton, who quit Google last year, has waged a media campaign to warn of the risks. Tesla CEO Elon Musk, Apple co-founder Steve Wozniak and Yoshua Bengio, who is considered an AI pioneer for his work on neural networks, were among the top industry figures to co-sign a letter last year calling for aggressive regulation of the AI sector.


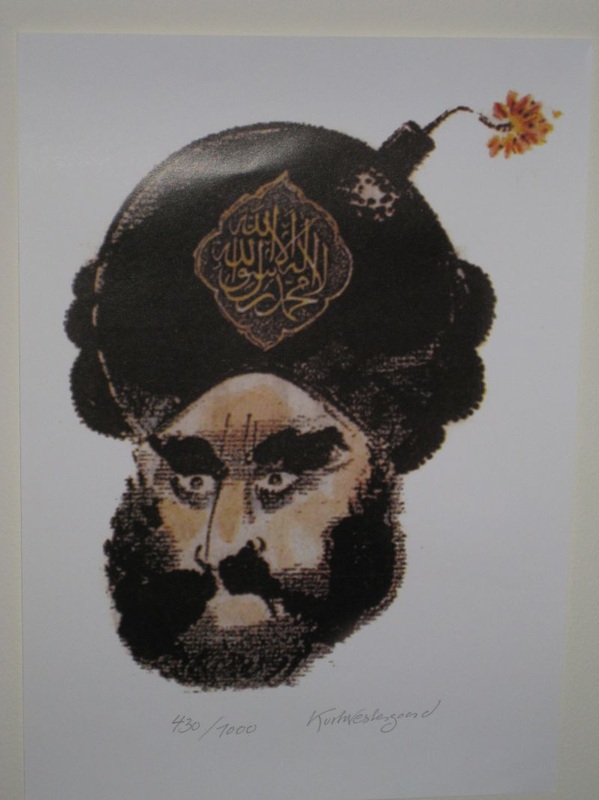

AI fakery is quickly becoming one of the biggest problems confronting us online. Deceptive pictures, videos and audio are proliferating as a result of the rise and misuse of generative artificial intelligence tools.

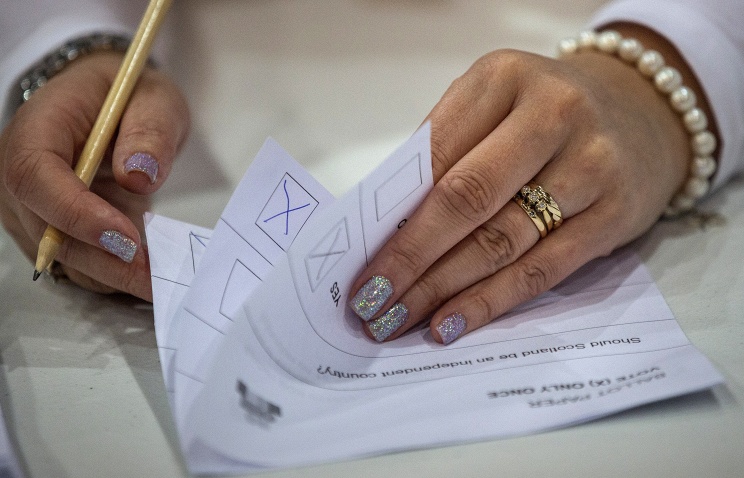

With AI deepfakes cropping up almost every day, depicting everyone from Taylor Swift to Donald Trump, it's getting harder to tell what's real from what's not. Video and image generators like DALL-E, Midjourney and OpenAI’s Sora make it easy for people without any technical skills to create deepfakes -- just type a request and the system spits it out. These fake images might seem harmless. But they can be used to carry out scams and identity theft or propaganda and election manipulation. Here is how to avoid being duped by deepfakes:

How to Spot a Deepfake

In the early days of deepfakes, the technology was far from perfect and often left telltale signs of manipulation. Fact-checkers have pointed out images with obvious errors, like hands with six fingers or eyeglasses that have differently shaped lenses. But as AI has improved, spotting fakes has become a lot harder. Some widely shared advice -- such as looking for unnatural blinking patterns among people in deepfake videos -- no longer holds, said Henry Ajder, founder of consulting firm Latent Space Advisory and a leading expert in generative AI. Still, there are some things to look for, he said. A lot of AI deepfake photos, especially of people, have an electronic sheen to them, “an aesthetic sort of smoothing effect” that leaves skin “looking incredibly polished,” Ajder said. He warned, however, that creative prompting can sometimes eliminate this and many other signs of AI manipulation. Check the consistency of shadows and lighting. Often the subject is in clear focus and appears convincingly lifelike but elements in the backdrop might not be so realistic or polished.

Look at the Faces

Face-swapping is one of the most common deepfake methods. Experts advise looking closely at the edges of the face. Does the facial skin tone match the rest of the head or the body? Are the edges of the face sharp or blurry? If you suspect video of a person speaking has been doctored, look at their mouth. Do their lip movements match the audio perfectly? Ajder suggests looking at the teeth. Are they clear, or are they blurry and somehow not consistent with how they look in real life? Cybersecurity company Norton says algorithms might not be sophisticated enough yet to generate individual teeth, so a lack of outlines for individual teeth could be a clue.

Think About the Bigger Picture

Sometimes the context matters. Take a beat to consider whether what you're seeing is plausible. The Poynter journalism website advises that if you see a public figure doing something that seems “exaggerated, unrealistic or not in character,” it could be a deepfake. For example, would the pope really be wearing a luxury puffer jacket, as depicted by a notorious fake photo? If he did, wouldn't there be additional photos or videos published by legitimate sources?

Using AI to Find the Fakes

Another approach is to use AI to fight AI. Microsoft has developed an authenticator tool that can analyze photos or videos to give a confidence score on whether it's been manipulated. Chipmaker Intel's FakeCatcher uses algorithms to analyze an image's pixels to determine if it's real or fake. There are tools online that promise to sniff out fakes if you upload a file or paste a link to the suspicious material. But some, like Microsoft's authenticator, are only available to selected partners and not the public. That's because researchers don't want to tip off bad actors and give them a bigger edge in the deepfake arms race. Open access to detection tools could also give people the impression they are “godlike technologies that can outsource the critical thinking for us” when instead we need to be aware of their limitations, Ajder said.

The Hurdles to Finding Fakes

All this being said, artificial intelligence has been advancing with breakneck speed and AI models are being trained on internet data to produce increasingly higher-quality content with fewer flaws. That means there’s no guarantee this advice will still be valid even a year from now. Experts say it might even be dangerous to put the burden on ordinary people to become digital Sherlocks because it could give them a false sense of confidence as it becomes increasingly difficult, even for trained eyes, to spot deepfakes.

China is using artificial intelligence in the operation of its 45,000km (28,000-mile) high-speed rail network, with the technology achieving several milestones, according to engineers involved in the project.

An AI system in Beijing is processing vast amounts of real-time data from across the country and can alert maintenance teams of abnormal situations within 40 minutes, with an accuracy as high as 95 per cent, they said in a peer-reviewed paper. “This helps on-site teams conduct reinspections and repairs as quickly as possible,” wrote Niu Daoan, a senior engineer at the China State Railway Group’s infrastructure inspection centre, in the paper published by the academic journal China Railway. In the past year, none of China’s operational high-speed railway lines received a single warning that required speed reduction due to major track irregularity issues, while the number of minor track faults decreased by 80 per cent compared to the previous year. According to the paper, the amplitude of rail movement caused by strong winds also decreased – even on massive valley-spanning bridges – with the application of AI technology. Machine intelligence can predict and issue warnings before problems arise, enabling precise and timely maintenance that keeps the infrastructure of high-speed rail lines in better condition than when it was first built, according to the researchers. Niu and his team said the significant amount of data generated by the sensors embedded in high-speed rail infrastructure was “forcing China to adopt new technologies such as big data and artificial intelligence”. The adoption of these technologies allowed for “more precise and timely assessments and scientific evaluations of infrastructure service status”, they said. According to the paper, after years of effort Chinese railway scientists and engineers have “solved challenges” in comprehensive risk perception, equipment evaluation, and precise trend predictions in engineering, power supply and telecommunications. The result was “scientific support for achieving proactive safety prevention and precise infrastructure maintenance for high-speed railways”, the engineers said. 
Before construction began on China’s first high-speed rail line 15 years ago, critics argued that maintenance would become an unbearable burden as wires and rails inevitably aged. By the end of last year, the network surpassed the length of the equator, posing an engineering and technological challenge to maintain its safe operation. Across the Pacific, the ageing US railway network is facing the same issue, with lack of maintenance leading to frequent safety incidents. In the past 50 years, the average number of derailments has exceeded 2,800 per year, peaking at nearly 10,000 in 1978. China’s high-speed rail is the fastest in the world, operating at 350km/h (217mph), with plans for an increase next year to 400km/h (249mph). The network is expected to continue its rapid expansion until it connects all cities with populations over 500,000. But Niu’s team identified a looming problem for the rail network: with rising incomes, a declining birth rate and an ageing population, the number of maintenance workers will gradually decrease compared to present levels.

US billionaire Elon Musk has taken OpenAI, the artificial intelligence research company he once helped to found, to court over an alleged breach of its original mission to develop AI technology not for profit but for the benefit of humanity.

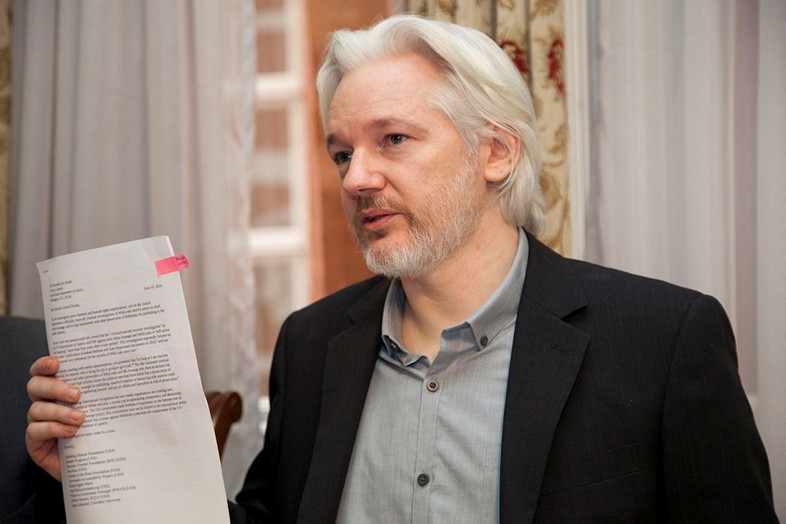

OpenAI, founded in 2015 as a non-profit research lab to develop an open-source Artificial General Intelligence (AGI), has now become a “closed-source de facto subsidiary of the largest technology company in the world,” Musk’s legal team wrote in the suit filed on Thursday in San Francisco Superior Court. The lawsuit claimed that Musk “has long recognized that AGI poses a grave threat to humanity – perhaps the greatest existential threat we face today.” “But where some like Mr. Musk see an existential threat in AGI, others see AGI as a source of profit and power,” it added. “Under its new board, it is not just developing but is actually refining an AGI to maximize profits for Microsoft, rather than for the benefit of humanity.” Musk left the OpenAI board of directors in 2018 and has since grown critical of the firm, especially after Microsoft invested at least $13 billion to obtain a 49% stake in a for-profit branch of OpenAI. “Contrary to the founding agreement, defendants have chosen to use GPT-4 not for the benefit of humanity, but as proprietary technology to maximize profits for literally the largest company in the world,” the suit read. The lawsuit listed OpenAI’s CEO Sam Altman and president Gregory Brockman as co-defendants in the case, and called for an injunction to block Microsoft from commercializing the tech. AI technology has improved at a rapid pace over the last two years, with OpenAI’s GPT language model going from powering a chatbot program in late 2022 to performing in the 90th percentile on SAT exams just four months later. 
More than 1,100 researchers, tech luminaries and futurists argued last year that the AI race poses “profound risks to society and humanity.” Even Altman himself has previously acknowledged that he is “a little bit scared” of the technology’s potential, and barred customers from using OpenAI to “develop or use weapons.” However, the company ignored its own ban on the use of its technology for “military and warfare” purposes and partnered up with the Pentagon, announcing in January that it was working on several artificial intelligence projects with the US military.

The New York Times filed a lawsuit on Wednesday against Microsoft and OpenAI for copyright infringement, claiming their artificial intelligence (AI) platforms represent unfair competition and a menace to the free press and society.

This is the first copyright challenge from a major American media organization, according to the Times. The newspaper has asked the federal court in Manhattan to hold the defendants responsible for “billions of dollars in statutory and actual damages” for their “unlawful copying and use of The Times’s uniquely valuable works.” It has also demanded that the companies destroy any chatbot models and training data that have used the outlet’s copyrighted material. “Defendants seek to free-ride on The Times’s massive investment in its journalism,” said the complaint, accusing Microsoft and OpenAI of “using The Times’s content without payment to create products that substitute for The Times and steal audiences away from it.” Microsoft has reportedly committed to investing $13 billion into OpenAI and has already used some of its technology in its search engine, Bing. In one example cited in the lawsuit, the ChatGPT-powered Browse With Bing featured results “reproduced almost verbatim” from the Times’ product review site Wirecutter, but did not attribute the content and removed the referral links used by the newspaper to generate commissions from sales, resulting in a loss of revenue. Microsoft and OpenAI “placed particular emphasis” on using the Times’ journalism because of the “perceived reliability and accuracy of the material,” the newspaper has claimed. “If The Times and other news organizations cannot produce and protect their independent journalism, there will be a vacuum that no computer or artificial intelligence can fill,” the complaint claimed, adding, “Less journalism will be produced, and the cost to society will be enormous.” The US newspaper of record noted that it had approached OpenAI and Microsoft in April to explore “an amicable resolution” of the copyright issue, but without success. 
Several other media outlets have reached agreements with OpenAI for the use of their content, including the Associated Press and Axel Springer, the German owners of Politico and Business Insider. The newspaper is represented by Susman Godfrey, the same law firm that filed a proposed class action lawsuit against Microsoft and OpenAI earlier this month, and represented Dominion Voting Systems in its defamation case against Fox News related to the 2020 US presidential election.

The ousted leader of ChatGPT-maker OpenAI is returning to the company that fired him late last week, culminating a days-long power struggle that shocked the tech industry and brought attention to the conflicts around how to safely build artificial intelligence.

San Francisco-based OpenAI said in a statement late Tuesday, “We have reached an agreement in principle for Sam Altman to return to OpenAI as CEO with a new initial board." The board, which replaces the one that fired Altman on Friday, will be led by former Salesforce co-CEO Bret Taylor, who also chaired Twitter's board before its takeover by Elon Musk last year. The other members will be former U.S. Treasury Secretary Larry Summers and Quora CEO Adam D’Angelo. OpenAI’s previous board of directors, which included D'Angelo, had refused to give specific reasons for why it fired Altman, leading to a weekend of internal conflict at the company and growing outside pressure from the startup's investors. The chaos also accentuated the differences between Altman — who's become the face of generative AI's rapid commercialization since ChatGPT's arrival a year ago — and members of the company's board who have expressed deep reservations about the safety risks posed by AI as it becomes more advanced. Microsoft, which has invested billions of dollars in OpenAI and has rights to its current technology, quickly moved to hire Altman on Monday, as well as another co-founder and former president, Greg Brockman, who had quit in protest after Altman's removal. That emboldened a threatened exodus of nearly all of the startup's 770 employees who signed a letter calling for the board's resignation and Altman's return. One of the four board members who participated in Altman's ouster, OpenAI co-founder and chief scientist Ilya Sutskever, later expressed regret and joined the call for the board's resignation. Microsoft in recent days had pledged to welcome all employees who wanted to follow Altman and Brockman to a new AI research unit at the software giant. Microsoft CEO Satya Nadella also made clear in a series of interviews Monday that he was still open to the possibility of Altman returning to OpenAI, so long as the startup's governance problems are solved. 
“We are encouraged by the changes to the OpenAI board,” Nadella posted on X late Tuesday. “We believe this is a first essential step on a path to more stable, well-informed, and effective governance.” In his own post, Altman said that “with the new board and (with) Satya's support, I'm looking forward to returning to OpenAI, and building on our strong partnership with (Microsoft)." Co-founded by Altman as a nonprofit with a mission to safely build so-called artificial general intelligence that outperforms humans and benefits humanity, OpenAI later became a for-profit business but one still run by its nonprofit board of directors. It's not clear yet if the board's structure will change with its newly appointed members. “We are collaborating to figure out the details,” OpenAI posted on X. “Thank you so much for your patience through this.” Nadella said Brockman, who was OpenAI's board chairman until Altman's firing, will also have a key role to play in ensuring the group “continues to thrive and build on its mission.” Hours earlier, Brockman returned to social media as if it were business as usual, touting a feature called ChatGPT Voice that was rolling out to users. “Give it a try — totally changes the ChatGPT experience,” Brockman wrote, flagging a post from OpenAI's main X account that featured a demonstration of the technology and playfully winking at recent turmoil. “It’s been a long night for the team and we’re hungry. How many 16-inch pizzas should I order for 778 people?” the person asks, using the number of people who work at OpenAI. ChatGPT's synthetic voice responded by recommending around 195 pizzas, ensuring everyone gets three slices. As for OpenAI's short-lived interim CEO Emmett Shear, the second interim CEO in the days since Altman's ouster, he posted on X that he was “deeply pleased by this result, after ~72 very intense hours of work.” “Coming into OpenAI, I wasn't sure what the right path would be,” wrote Shear, the former head of Twitch. 
“This was the pathway that maximized safety alongside doing right by all stakeholders involved. I'm glad to have been a part of the solution.”

Country singers, romance novelists, video game artists and voice actors are appealing to the U.S. government for relief -- as soon as possible -- from the threat that artificial intelligence poses to their livelihoods. "Please regulate AI. I'm scared," wrote a podcaster concerned about his voice being replicated by AI in one of thousands of letters recently submitted to the U.S. Copyright Office. Technology companies, by contrast, are largely happy with the status quo that has enabled them to gobble up published works to make their AI systems better at mimicking what humans do. The nation's top copyright official hasn't yet taken sides. She told The Associated Press she's listening to everyone as her office weighs whether copyright reforms are needed for a new era of generative AI tools that can spit out compelling imagery, music, video and passages of text. "We've received close to 10,000 comments," said Shira Perlmutter, the U.S. register of copyrights, in an interview. "Every one of them is being read by a human being, not a computer. And I myself am reading a large part of them."

What's at stake?

Perlmutter directs the U.S. Copyright Office, which registered more than 480,000 copyrights last year covering millions of individual works but is increasingly being asked to register works that are AI-generated. So far, copyright claims for fully machine-generated content have been soundly rejected because copyright laws are designed to protect works of human authorship. But, Perlmutter asks, as humans feed content into AI systems and give instructions to influence what comes out, "is there a point at which there's enough human involvement in controlling the expressive elements of the output that the human can be considered to have contributed authorship?" That's one question the Copyright Office has put to the public. A bigger one -- the question that's fielded thousands of comments from creative professions -- is what to do about copyrighted human works that are being pulled from the internet and other sources and ingested to train AI systems, often without permission or compensation. More than 9,700 comments were sent to the Copyright Office, part of the Library of Congress, before an initial comment period closed in late October. Another round of comments is due by December 6. After that, Perlmutter's office will work to advise Congress and others on whether reforms are needed.

What are artists saying?

Addressing the "Ladies and Gentlemen of the US Copyright Office," the Family Ties actor and filmmaker Justine Bateman said she was disturbed that AI models were "ingesting 100 years of film" and TV in a way that could destroy the structure of the film business and replace large portions of its labor pipeline. It "appears to many of us to be the largest copyright violation in the history of the United States," Bateman wrote. "I sincerely hope you can stop this practice of thievery." Airing some of the same AI concerns that fueled this year's Hollywood strikes, television showrunner Lilla Zuckerman (Poker Face) said her industry should declare war on what is "nothing more than a plagiarism machine" before Hollywood is "coopted by greedy and craven companies who want to take human talent out of entertainment." The music industry is also threatened, said Nashville-based country songwriter Marc Beeson, who's written tunes for Carrie Underwood and Garth Brooks. Beeson said AI has potential to do good but "in some ways, it's like a gun -- in the wrong hands, with no parameters in place for its use, it could do irreparable damage to one of the last true American art forms." While most commenters were individuals, their concerns were echoed by big music publishers -- Universal Music Group called the way AI is trained "ravenous and poorly controlled" -- as well as author groups and news organizations including The New York Times and The Associated Press.

Is it fair use?

What leading tech companies like Google, Microsoft and ChatGPT-maker OpenAI are telling the Copyright Office is that their training of AI models fits into the "fair use" doctrine that allows for limited uses of copyrighted materials such as for teaching, research or transforming the copyrighted work into something different. "The American AI industry is built in part on the understanding that the Copyright Act does not proscribe the use of copyrighted material to train Generative AI models," says a letter from Meta Platforms, the parent company of Facebook, Instagram and WhatsApp. The purpose of AI training is to identify patterns "across a broad body of content," not to "extract or reproduce" individual works, it added. So far, courts have largely sided with tech companies in interpreting how copyright laws should treat AI systems. In a defeat for visual artists, a federal judge in San Francisco last month dismissed much of the first big lawsuit against AI image-generators, though allowed some of the case to proceed. Most tech companies cite as precedent Google's success in beating back legal challenges to its online book library. The U.S. Supreme Court in 2016 let stand lower court rulings that rejected authors' claim that Google's digitizing of millions of books and showing snippets of them to the public amounted to copyright infringement. But that's a flawed comparison, argued former law professor and bestselling romance author Heidi Bond, who writes under the pen name Courtney Milan. Bond said she agrees that "fair use encompasses the right to learn from books," but Google Books obtained legitimate copies held by libraries and institutions, whereas many AI developers are scraping works of writing through "outright piracy." Perlmutter said this is what the Copyright Office is trying to help sort out. "Certainly, this differs in some respects from the Google situation," Perlmutter said. "Whether it differs enough to rule out the fair use defense is the question in hand."

OpenAI, the company that created ChatGPT a year ago, said Friday it had dismissed CEO Sam Altman as it no longer had confidence in his ability to lead the Microsoft-backed firm.

Altman, 38, became a tech world sensation with the release of ChatGPT, an artificial intelligence chatbot with unprecedented capabilities, churning out human-level content like poems or artwork in just seconds. OpenAI's board said in a statement that Altman's departure "follows a deliberative review process," which concluded "he was not consistently candid in his communications with the board, hindering its ability to exercise its responsibilities." "The board no longer has confidence in his ability to continue leading OpenAI," it added. Altman's decision last year to release the app paid off in ways he never imagined, catapulting the Missouri-born Stanford dropout to household name stardom. The launch of ChatGPT ignited an AI race, with contenders including tech giants Amazon, Google, Microsoft and Meta. Microsoft has invested billions of dollars in OpenAI and has woven the company's technology into its offerings, including search engine Bing. Altman has testified before US Congress about AI and spoken with heads of state about the technology, as pressure ramps up to regulate against risks such as AI's potential use in bioweapons, misinformation and other threats. The statement said the board was "grateful for Sam's many contributions to the founding and growth of OpenAI. At the same time, we believe new leadership is necessary as we move forward." Altman would be replaced on an interim basis by Mira Murati, the company's chief technology officer, the statement said. OpenAI's board of directors consists of OpenAI chief scientist Ilya Sutskever, Quora CEO Adam D'Angelo, technology entrepreneur Tasha McCauley, and Georgetown Center for Security and Emerging Technology's Helen Toner. Altman earlier this month led a major developer's conference for OpenAI, announcing a new set of products that were largely met positively in Silicon Valley. 
The young CEO on Thursday told AFP he understood some of the worries when it came to how people feel about AI and its disruptive powers. "(I have) lots of empathy for why anyone would feel, however they feel, about this," he told AFP of the platform that is credited with launching the revolution in generative artificial intelligence (AI). Altman was speaking on the sidelines of the annual Asia-Pacific Economic Cooperation (APEC) summit in San Francisco where he was swarmed by fans after his appearance, many of whom wanted to take selfies with him.

According to EU code, tech platforms like Twitter are connected with “fact-checkers, civil society, and third-party organizations with specific expertise on disinformation.” In other words, avid gatekeepers of the establishment narrative. And on August 25th, adherence will no longer be voluntary.

The EU should consider getting out of the control freak business if it truly wants to help the European free press. Maybe then, journalists here in Europe trying our best to fully inform our audiences against information barriers created by Brussels won’t have to redirect our internet connections to places like Vietnam, Mexico, Turkey, or Brazil in order to access information and sources that the EU doesn’t like.

US Vice President Kamala Harris has been appointed to head a new artificial intelligence initiative in partnership with leading companies in the field, the White House announced in a press release on Thursday. Harris and other senior officials in the administration of President Joe Biden will meet with the CEOs of Alphabet, Anthropic, Microsoft, and OpenAI to remind them of their “responsibility to make sure their products are safe before they are deployed or made public.” The meeting is intended to keep the companies on track toward “driving responsible, trustworthy and ethical innovation with safeguards that mitigate risks and potential harms to individuals and our society,” according to the White House, which referenced recent executive orders and official statements reminding tech companies that their products were subject to civil rights law and other protections against unlawful discrimination.

The four companies, along with Hugging Face, NVIDIA, and Stability AI, will also submit to a public evaluation of their capabilities by thousands of industry experts and other curious members of the public at DEFCON 31, the Las Vegas hacking convention that has repeatedly put the insecurity of the US’ voting machines on display by giving children a chance to hack them. The White House also announced the creation of seven new National AI Research Institutes focusing on climate, agriculture, energy, public health, education, and cybersecurity, explaining the new institutes would “support the development of a diverse AI workforce” with $140 million in funding from the National Science Foundation. The administration is also giving the public the chance to weigh in on government AI policy starting this summer, according to the press release. Tasked with stemming the flow of migrants over the US’ southern border upon taking office in 2021, Harris instead presided over a record amount of illegal immigration, earning her the lowest approval rating of any modern US vice president. Last year, she was assigned to develop a blueprint for fighting “disinformation,” harassment and abuse online despite having no experience in the technology sector. While hundreds of experts in the AI field have called for a moratorium on, or at least a dramatic slowdown of, AI development until internationally agreed-upon safety measures can be put in place, the US has thus far shied away from issuing any strong statements about the technology. Last month, Biden met with his Council of Advisors on Science and Technology to discuss the “risks and opportunities” in the field but declined to address the experts’ warnings while admitting AI “could be” dangerous.

US House and Senate lawmakers have raised alarm bells about the potential use of artificial intelligence in America’s nuclear arsenal, arguing that the technology must not be put in a position to fire off warheads on its own.
A group of three Democrats and one Republican introduced a bill that calls for banning AI from being used in a way that could lead to it launching nuclear weapons. If enacted, the legislation would codify a current Pentagon policy that requires a human to be “in the loop” on any launch decisions.

“We want to make sure there’s a human in the process of launching a nuclear weapon if, at any point time, we need to launch a nuclear weapon,” US Representative Ken Buck, a Colorado Republican, said on Friday in a Fox News interview. “So you see sci-fi movies, and the world is out of control because AI has taken over – we’re going to have humans in this process.” Buck alluded to Hollywood’s portrayal of a nightmare scenario in which AI systems gain control of nuclear weapons, as depicted in such films as ‘WarGames’ and ‘Colossus: The Forbidden Project.’ He warned that the use of AI without a human chain of command would be “reckless” and “dangerous.” Representative Ted Lieu agreed, saying, “AI is amazing. It’s going to help society in many different ways. It can also kill us.” Lieu, a California Democrat, is a lead backer of the AI legislation, along with two other Democrats – Representative Don Beyer of Virginia and Senator Edward Markey of Massachusetts. Although the idea of an AI-instigated nuclear war might have been dismissed in the past as science fiction, many scientists believe that it’s no longer a far-fetched risk. A poll released earlier this month by the Stanford Institute for Human-Centered Artificial Intelligence found that 36% of AI researchers agreed that the technology could cause a “nuclear-level catastrophe.” US Central Command (CENTCOM) last week announced the hiring of a former Google executive as its first-ever AI adviser. The Pentagon has asked Congress for $1.8 billion in funding for AI research in its next fiscal year.

After a song featuring the AI-generated voices of the rappers Drake and The Weeknd went viral on Monday, the world’s biggest record label demanded a reckoning from streaming platforms. The Netherlands-based Universal Music Group (UMG), which represents both artists, has already tried to block artificial intelligence programs from accessing its catalog, but that appears to be easier said than done.
A song titled ‘heart on my sleeve’ clocked more than 15 million plays on TikTok, 625,000 on Spotify and over 230,000 on YouTube in just a few hours, before the platforms moved to take it down for copyright infringement. Drake and The Weeknd are both represented by Republic Records, a subsidiary of UMG. The Dutch-based label is the world’s largest, with a market share greater than all independents combined. After the incident, UMG issued a statement insisting that having AI generate music from their artists’ catalog “begs the question as to which side of history all stakeholders in the music ecosystem want to be on: the side of artists, fans and human creative expression, or on the side of deep fakes, fraud and denying artists their due compensation.” Platforms “have a fundamental legal and ethical responsibility to prevent the use of their services in ways that harm artists,” the label added.

The company has “a moral and commercial responsibility to our artists to work to prevent the unauthorized use of their music,” a spokesman told CNN on Tuesday. “I’m not sure how effective this will be as AI services will likely still be able to access the copyrighted material one way or another,” Karl Fowlkes, an entertainment and business attorney in New York, told CNN. Fowlkes argued that the government should “explicitly ban” AI companies from using copyrighted work to train their models. Copyright is intended to protect original art, “not works created by machines that used the original art to create new work,” he said.

The US Copyright Office issued new guidance in March saying that it will decide on a case-by-case basis whether AI-generated work can be copyrighted, explaining that this depends on whether something is merely a “mechanical reproduction” or the result of an author’s “own original mental conception, to which [the author] gave visible form.”

DJ and producer David Guetta demonstrated in February how easy it was to create new music using two AI programs, ChatGPT for lyrics and Uberduck for vocals. After just an hour, he had a rap song that sounded like the work of Eminem. Guetta played it at one of his shows, but said he would never release it commercially. “That is an ethical problem that needs to be addressed because it sounds crazy to me that today I can type lyrics and it’s going to sound like Drake is rapping it, or Eminem,” he said at the time.

Ukraine is now using Clearview AI's facial recognition technology for purposes such as identifying Russian soldiers, its CEO claimed. Hoan Ton-That told Reuters the company offered Ukraine's defense ministry free access to its system following the invasion by Russia. According to the report, Clearview suggested Ukraine could use the tech to reunite refugees with family members, fight misinformation, assess at checkpoints whether someone is a person of interest, and identify dead bodies. The company hasn't offered its technology to Russia. Engadget has contacted the defense ministry for comment. Ukrainian officials previously suggested they were considering using the tech.

It's not clear exactly what Ukraine is using the system for, Ton-That said, while noting it shouldn't be used as the sole means of identification. He and Clearview advisor Lee Wolosky claimed other Ukrainian government agencies plan to start using the tech over the coming days. Ton-That said Clearview has access to more than 2 billion photos from VKontakte, the Russian social media service, and more than 10 billion images overall in its database. Clearview's controversial tech has come under fire from many quarters over the last few years. This month, Italy fined the company €20 million ($27.9 million) and ordered it to delete images of Italian nationals. The UK provisionally fined Clearview £17 million ($22.6 million) in November for breaking data protection laws. Canada, Australia and France are among the countries that have told Clearview to delete images of their residents and citizens. It's also facing privacy lawsuits in the US, where lawmakers have urged federal agencies to stop using the tech. Meta, Google, Venmo, Twitter and other platforms have demanded that Clearview stop scraping images from them as well. |
